Client Ghosting After Shortlists? Here's Why AI Scoring Changes the Conversation

Struggling with client ghosting after shortlists? Discover how AI scoring improves hiring decisions, builds trust, and drives faster client responses with data-driven candidate evaluation.


Introduction

You've done the work. You've sourced aggressively, screened meticulously, and presented what you believe is a strong, qualified shortlist. You hit send, wait for feedback… and then… silence.

Days pass. You follow up. Still nothing. You try again. Radio silence. This isn't just frustrating; it's demoralizing, costly, and increasingly common.

Client ghosting after shortlist submission isn't rare; it's a pervasive pain point that erodes trust, wastes recruiter time, and damages agency profitability.

But what if the problem isn't just that your client is unresponsive, or even that your shortlist isn't good enough? What if the real issue lies in how the shortlist is presented?

Traditional shortlists often lack the clarity, objectivity, and actionable insight needed to prompt a timely, meaningful response. They leave clients to interpret raw data through their own biases, leading to confusion, delay, or disengagement.

This is where AI scoring, when implemented thoughtfully, doesn't just automate a task; it fundamentally transforms the recruiter-client conversation. By replacing subjective, opaque evaluations with transparent, data-driven insights, AI scoring gives clients a clear, defensible framework for evaluating candidates.

It turns the shortlist from a list of names into a structured decision-making tool that reduces ambiguity, builds trust, and makes it significantly harder for clients to disengage without consequence.

In this article, we'll dissect why clients ghost shortlists, explain how AI scoring addresses the root causes of silence, and provide a practical, step-by-step blueprint for implementing AI-powered scoring that doesn't just improve efficiency but changes the nature of the conversation, making ghosting less likely and meaningful engagement more probable.

Why Clients Ghost After Shortlists: The Root Causes

1. The Shortlist Lacks Clarity and Actionable Insight

The most common reason for client silence is not disinterest; it's confusion or uncertainty about what to do next.

- The Problem: Traditional shortlists often consist of little more than a list of names, resumes, and maybe a recruiter's subjective note like "Strong candidate" or "Good fit." They don't clearly answer the client's core questions: How well does this candidate match what we actually need? Where are the strengths and gaps? How do they compare to each other?

- The Consequence: The client is left to do the mental work of comparing resumes, interpreting vague notes, and trying to infer fit, all while juggling other priorities. If the shortlist doesn't make the next step obvious (e.g., "These two are clear front-runners; here's why"), it's easy to put off reviewing it, especially if the client is busy, unsure, or lacks confidence in their ability to evaluate talent objectively.

- The Result: The shortlist sits in the inbox, waiting for "when I have time," which for many busy hiring managers never comes.

2. The Evaluation Feels Subjective and Untrustworthy

Clients, especially those who have been burned by bad hires before, are often skeptical of recruiter judgments.

- The Problem: If the shortlist relies heavily on the recruiter's gut feeling ("I just had a good feeling about this one") or vague praise ("She's really sharp"), it triggers skepticism. Clients wonder: Is this based on actual evidence, or is it just the recruiter's bias? Did they favor someone because they went to the same school? Are they pushing a candidate because it's easy to place, not because it's the best fit?

- The Consequence: This lack of trust leads to paralysis. The client doesn't know whether to believe the assessment, so they delay making a decision or, worse, ignore the shortlist entirely and start their own search because they feel they can't rely on the agency's judgment.

- The Result: Silence isn't disinterest; it's a lack of confidence in the process. The client ghosts because they don't feel equipped to act on the information provided.

3. There's No Clear "Next Step" or Decision Framework

Even if the client reviews the shortlist, they may not know how to proceed.

- The Problem: Traditional shortlists rarely include a recommended path forward. They don't answer: Should we interview all of these? Are there clear top candidates? Where should we focus our interview time? What questions should we ask to probe specific gaps or strengths?

- The Consequence: Without a clear call to action, the client is left in a state of decision fatigue. They know they need to do something, but they're not sure what, and the effort required to figure it out feels disproportionate to the perceived benefit, especially if they're unsure of the shortlist's quality.

- The Result: The task gets postponed indefinitely. It's not that they don't care; it's that they don't know how to engage constructively.

4. The Shortlist Doesn't Address Unspoken Concerns or Biases

Clients often have hidden worries or assumptions that a traditional shortlist doesn't alleviate.

- The Problem: The client might be thinking: Are these candidates really available, or will they ghost us too? Do they actually have the skills they claim, or are they exaggerating? Is the recruiter just sending me whoever is easiest to place, not whoever is best? These concerns are rarely voiced directly but heavily influence whether the client feels motivated to engage.

- The Consequence: If the shortlist doesn't proactively address these unspoken questions through objective validation, transparency about the process, or evidence of skill, the client's skepticism remains and engagement stalls.

- The Result: The client disengages not because they dislike the candidates, but because they don't trust that the shortlist represents a genuine, low-risk opportunity to find a good fit.

5. The Recruiter-Agency Relationship Lacks Transparency and a Sense of Partnership

Sometimes the ghosting isn't about the shortlist at all; it's about the broader dynamic.

- The Problem: If the client feels like they're just a ticket in the agency's queue, or if past interactions have been transactional and opaque, they may not feel invested in responding. They see the agency as a vendor, not a partner.

- The Consequence: When the shortlist arrives, there's no sense of shared purpose or mutual accountability. It feels like another task being dumped on them, not a collaborative step in a joint mission to find great talent.

- The Result: The client disengages because they don't feel like they're part of a team working toward a common goal. Silence becomes a passive way to opt out of a relationship that doesn't feel reciprocal or valuable.

How AI Scoring Changes the Game: From Subjective Lists to Objective Decision Tools

AI scoring, when implemented as part of a structured, transparent screening process, doesn't just automate evaluation; it fundamentally alters the recruiter-client interaction by introducing clarity, objectivity, and actionable insight. It turns the shortlist from a passive report into an active decision-making catalyst.

1. AI Scoring Provides Clear, Comparable Metrics (Eliminating Guesswork)

Instead of relying on vague notes like "strong candidate" or "good technical skills," AI scoring delivers quantifiable, standardized measures of fit.

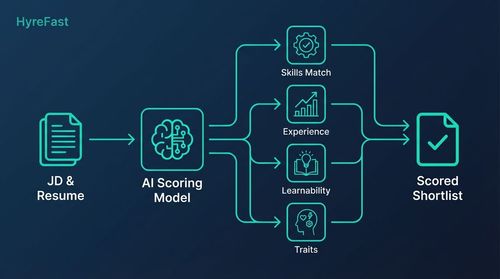

- How It Works: AI models (using NLP, semantic matching, or skill inference) analyze resumes, profiles, and assessment data to generate scores based on predefined, job-relevant criteria. These might include:

- Skills Match Score: How closely the candidate's demonstrated skills align with the job's requirements (e.g., 85/100 for AWS expertise).

- Experience Relevance Score: How pertinent the candidate's past roles and projects are to the specific tasks of this job.

- Learnability/Potential Score: An inference of how quickly the candidate can acquire new skills (based on career trajectory, project complexity, or learning agility indicators).

- Behavioral Trait Scores: (If using structured assessments) Scores on traits like problem-solving, communication, or resilience from validated tests or SJTs.

- Overall Fit Score: A composite or weighted score that summarizes the candidate's alignment with the role's success profile.

- The Impact on Client Engagement:

- Reduces Ambiguity: The client can see at a glance how each candidate stacks up on key dimensions, with no need to interpret vague recruiter notes.

- Enables Comparison: Scores allow for easy, objective comparison between candidates ("Candidate A: 82 skills match, Candidate B: 76 skills match, but B has higher learnability").

- Builds Trust Through Transparency: When the scoring methodology is shared (e.g., "Our AI model evaluates skills match by comparing resume content to the job description using semantic similarity"), the client sees that the evaluation is based on a consistent, defensible method, not just opinion.

- Makes the Next Step Obvious: If two candidates score 85+ on skills match while the others are below 60, the client knows where to focus interview time, which reduces the effort required to engage.
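To make the "Overall Fit Score" concrete, here is a minimal sketch of one way to build a composite: a weighted average of component scores that stays on the same 0-100 scale. The weights, the `CandidateScores` type, and the `overall_fit` helper are hypothetical illustrations, not a prescribed formula; in practice the weights would come from the role's success profile.

```python
from dataclasses import dataclass

# Hypothetical weights for illustration; tune them to the role's success profile.
WEIGHTS = {"skills_match": 0.5, "experience_relevance": 0.3, "learnability": 0.2}


@dataclass
class CandidateScores:
    name: str
    skills_match: float          # 0-100
    experience_relevance: float  # 0-100
    learnability: float          # 0-100


def overall_fit(candidate: CandidateScores, weights: dict = WEIGHTS) -> float:
    """Weighted average of the component scores, kept on the same 0-100 scale."""
    total_weight = sum(weights.values())
    weighted_sum = sum(getattr(candidate, field) * w for field, w in weights.items())
    return round(weighted_sum / total_weight, 1)


shortlist = [
    CandidateScores("A", skills_match=82, experience_relevance=78, learnability=70),
    CandidateScores("B", skills_match=76, experience_relevance=80, learnability=88),
]

# Rank by overall fit so the client sees the strongest composite first.
for c in sorted(shortlist, key=overall_fit, reverse=True):
    print(f"Candidate {c.name}: skills {c.skills_match}, overall fit {overall_fit(c)}")
```

With these assumed weights, Candidate B (79.6) edges out Candidate A (78.4) despite the lower skills match, which is exactly the kind of trade-off the composite should surface alongside the individual scores rather than hide.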

2. AI Scoring Introduces Objectivity and Reduces Perceived Bias (Building Trust)

By introducing standardized, data-driven evaluation, AI scoring helps counteract the perception that shortlists are based on recruiter bias or favoritism.

- How It Works: When candidates are scored against the same criteria using the same model, the evaluation becomes more consistent and less susceptible to individual recruiter moods, affinities, or unconscious biases (e.g., favoring candidates from certain schools or backgrounds). A model is not automatically neutral, however: it can inherit biases from the data it was trained on, which is why its scores need regular bias audits.

- The Impact on Client Engagement:

- Addresses Skepticism Head-On: Clients who have been burned by bad hires or biased assessments are more likely to trust a process that shows its work. Seeing that Candidate X scored 78 on skills match because their resume demonstrated specific, verifiable experience with Docker and Kubernetes is more convincing than being told they're "strong on cloud."

- Shifts the Conversation from "Do I Believe This?" to "What Does This Mean?": The client's energy moves from questioning the recruiter's judgment to interpreting the results and deciding on next steps, a far more productive and engaging use of their time.

- Provides a Basis for Discussion: If the client disagrees with a score (e.g., "I think this candidate's SQL skills are stronger than the score shows"), it creates a clear, specific point for dialogue: "Let's look at the resume together. Where do you see the SQL experience that the model might have missed?" This turns potential conflict into collaborative refinement.

- Reduces the Perception of Arbitrariness: When the client sees that all candidates are evaluated on the same scale using the same method, it becomes harder to believe the shortlist is random or manipulated.

3. AI Scoring Enables Actionable Insights and Recommended Next Steps (Reducing Decision Fatigue)

Smart agencies don't just dump scores on the client; they use them to generate clear, actionable guidance.

- How It Works: Based on the AI scores and the role's success profile, the system (or the recruiter using the scores as input) can generate insights like:

- Clear Tiering: "Based on skills match scores, Candidates A and B are strong Tier 1 fits (80+), Candidate C is a Tier 2 potential fit (65-79) with high learnability, and Candidates D-F are below threshold."

- Gap Analysis: "Candidate A shows strong AWS skills (90) but lower exposure to infrastructure-as-code (65), suggesting a focus area for the technical interview."

- Interview Focus Areas: "To validate the AI's assessment, we recommend asking Candidate B about their experience migrating legacy systems to the cloud and their approach to cost optimization."

- Recommended Action: "We suggest moving forward with Candidates A and B for technical interviews, and using Candidate C as a backup if A or B withdraw."

- The Impact on Client Engagement:

- Reduces the Cognitive Load: The client doesn't have to sift through raw data to figure out what's important; the system highlights the key takeaways.

- Makes Engagement Low-Effort and High-Reward: A short, insight-driven summary is far more likely to be read and acted upon than a dense packet of resumes.

- Creates a Clear Call to Action: Phrases like "We recommend interviewing A and B" or "Focus the technical interview on X and Y" give the client an obvious, easy next step, reducing the temptation to procrastinate.

- Turns the Shortlist into a Tool for Collaboration: The insights become a starting point for discussion. A client might ask, "I see you scored Candidate C lower on skills match; what makes you think they might still be a strong fit?" and the recruiter can point to C's high learnability score. This fosters partnership, not just one-way transmission.
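The tiering and gap-analysis steps above are simple enough to automate. Below is a minimal sketch: the tier cutoffs mirror the example in the text (80+ for Tier 1, 65-79 for Tier 2), while `GAP_THRESHOLD` and the helper names are hypothetical choices for illustration. Sub-skills that fall under the gap threshold are turned into suggested interview probes.

```python
# Tier cutoffs mirror the example above (80+ Tier 1, 65-79 Tier 2).
# GAP_THRESHOLD is a hypothetical value chosen for illustration.
TIER_1_MIN = 80
TIER_2_MIN = 65
GAP_THRESHOLD = 70


def tier(skills_match: float) -> str:
    if skills_match >= TIER_1_MIN:
        return "Tier 1"
    if skills_match >= TIER_2_MIN:
        return "Tier 2"
    return "Below threshold"


def interview_focus(sub_scores: dict) -> list:
    """Sub-skills scoring under GAP_THRESHOLD become suggested interview probes."""
    return sorted(skill for skill, score in sub_scores.items() if score < GAP_THRESHOLD)


def summarize(name: str, skills_match: float, sub_scores: dict) -> str:
    line = f"Candidate {name}: {tier(skills_match)} (skills match {skills_match})"
    focus = interview_focus(sub_scores)
    if focus:
        line += "; probe in interview: " + ", ".join(focus)
    return line


# Prints: Candidate A: Tier 1 (skills match 88); probe in interview: infrastructure-as-code
print(summarize("A", 88, {"AWS": 90, "infrastructure-as-code": 65}))
```

The output is deliberately a single sentence per candidate: the recruiter still writes the recommendation, but the tier and the probe list give it a consistent, checkable basis.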

4. AI Scoring Addresses Unspoken Concerns Through Transparency and Validation (Reducing Skepticism)

By making the evaluation process visible and verifiable, AI scoring helps alleviate the client's hidden doubts.

- How It Works: Agencies can use AI scoring to:

- Show the Work: Provide visibility into why a candidate received a certain score (e.g., "Skills match score of 82 based on strong semantic similarity to JD keywords: 'AWS EC2', 'S3', 'IAM', 'CloudFormation'").

- Validate with Data: Point to specific evidence in the resume or assessment that supports the score (e.g., "The score reflects the candidate's role as 'AWS Solutions Architect' at XYZ Corp, where they designed and deployed multi-region serverless applications").

- Combine with Human Judgment: Use AI scores as a starting point, then add recruiter insights based on structured interviews or work samples (e.g., "AI skills match: 80. Recruiter note: Candidate demonstrated deep understanding of cost optimization strategies in the technical screen").

- Offer Blind or Anonymized Views (If Appropriate): For early-stage screening, share scores and redacted profiles to focus purely on merit, addressing concerns about bias based on name, gender, or educational pedigree.

- The Impact on Client Engagement:

- Builds Credibility Through Transparency: When the client can see the reasoning behind the score, they feel less like they're being asked to take a leap of faith.

- Reduces Fear of Hidden Flaws: Knowing that the score is based on specific, verifiable criteria (not just a gut feeling) alleviates worries about candidates exaggerating their skills.

- Shifts Focus to Substance: The conversation moves from "Do I trust this?" to "What does this tell us about the candidate's actual capabilities?" That is a far more productive exchange.

- Makes Ghosting Feel Like a Missed Opportunity: When the client sees that the shortlist is based on a clear, transparent, and defensible process, ignoring it feels less like a harmless delay and more like a potentially costly oversight.
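One lightweight way to "show the work" is to attach literal evidence to each score: which job-description terms actually appear in the resume and which don't. The sketch below assumes you already have a list of JD terms and the resume as plain text; `matched_terms` and `explain_score` are hypothetical helpers. Note that this is an evidence layer only. If the score itself comes from a semantic model, that model may credit paraphrased experience that a literal match like this will miss.

```python
import re


def matched_terms(jd_terms: list, resume_text: str) -> list:
    """JD terms found in the resume as whole words, ignoring case."""
    found = []
    for term in jd_terms:
        # (?<!\w) and (?!\w) stop "S3" from matching inside e.g. "S30".
        pattern = r"(?<!\w)" + re.escape(term) + r"(?!\w)"
        if re.search(pattern, resume_text, flags=re.IGNORECASE):
            found.append(term)
    return found


def explain_score(score: int, jd_terms: list, resume_text: str) -> str:
    hits = matched_terms(jd_terms, resume_text)
    missing = [t for t in jd_terms if t not in hits]
    return (
        f"Skills match {score}. "
        f"Evidence in resume: {', '.join(hits) or 'none'}. "
        f"Not found: {', '.join(missing) or 'none'}."
    )


resume = ("AWS Solutions Architect at XYZ Corp: designed multi-region serverless "
          "applications on AWS EC2 and S3, managed IAM policies and CloudFormation stacks.")
jd = ["AWS EC2", "S3", "IAM", "CloudFormation", "Terraform"]
print(explain_score(82, jd, resume))
```

Listing what was *not* found matters as much as the hits: it tells the client exactly where to probe, and it gives them a concrete place to disagree if they see the experience elsewhere in the resume.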

5. AI Scoring Fosters a Partnership Dynamic by Demonstrating Rigor and Value

By introducing a layer of intelligent, transparent evaluation, AI scoring signals to the client that the agency is not just a resume courier but a strategic partner committed to quality and rigor.

- How It Works: The agency positions AI scoring not as a black box, but as a tool that enhances its expertise:

- "We use AI to objectively assess skills match and surface high-potential candidates, freeing our recruiters to focus on what they do best: evaluating motivation and cultural fit, and providing strategic counsel."

- "The AI score is one input into our holistic evaluation; we combine it with structured interview insights and work samples to give you a complete picture."

- "Here's how we ensure the AI is fair and accurate: we regularly audit its scores against hiring manager feedback and placement outcomes."

- The Impact on Client Engagement:

- Shifts the Perception from Vendor to Partner: The client sees the agency as investing in rigor and transparency, not just trying to make a quick placement.

- Increases the Perceived Value of the Service: When the client understands that they're getting a data-informed, transparent evaluation rather than just a list of names, they're more likely to view the agency as indispensable.

- Creates a Sense of Shared Purpose: The scoring process becomes a collaborative tool: "Let's look at these scores together and see what they tell us about the candidate pool."

- Makes Ghosting Feel Like a Breach of Trust: When the client recognizes the agency's effort to provide a clear, objective, and helpful evaluation, ignoring it feels less like a passive oversight and more like a disregard for the partnership.

Implementing AI Scoring to Stop the Ghosting: A Practical, Step-by-Step Blueprint

You don't need to overhaul your entire process overnight. Start where the pain is greatest (typically the lack of clarity and trust in the shortlist) and build momentum.

Phase 0: Diagnose Your Specific Ghosting Triggers (Week 1)

- Analyze Past Ghosting Incidents: Look at requisitions where clients went silent after receiving a shortlist. What was the feedback (or lack thereof) when they finally responded? Was it confusion? Disagreement on quality? Lack of time?

- Survey Your Recruiters: Ask: When clients don't respond to shortlists, what do you think is the most common reason? Is it unclear next steps? Perceived subjectivity? Lack of trust?

- Review Your Current Shortlist Format: What information do you currently provide? Is it just names and resumes? Do you include any scoring, ranking, or insights? How subjective is it?

- Identify the Core Issues: Is it lack of clarity? Perceived bias? No clear next step? Failure to build trust?

Phase 1: Implement Transparent, Job-Relevant AI Scoring (Weeks 2-5)

- Define What Success Looks Like (If Not Already Done): Collaborate with hiring managers (or use data from past placements) to create a clear "Success Profile" for each role type: What skills, experiences, and traits are truly necessary for success? What would a high performer look like?

- Choose or Build Your AI Scoring Model:

- For Skills Match: Use NLP-based semantic matching (e.g., Sentence-BERT, fine-tuned BERT) to compare resume content to the job description. Output a score (0-100) representing conceptual alignment.

- For Experience Relevance: Use models that weigh the recency, duration, and relevance of past roles/projects to the JD's requirements.

- For Learnability/Potential: Use models that analyze career trajectory, project complexity, or indicators of self-directed learning (e.g., open-source contributions, certifications earned).

- For Behavioral Traits (If Applicable): Integrate scores from validated assessments (SJTs, ability tests) if used in your process.

- Key: Ensure the model is trained on relevant data and, crucially, audit it for bias (e.g., check if scores systematically differ by gender, ethnicity, or educational background for comparable candidates).
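The skills-match step boils down to "compare two texts, get a similarity, scale it to 0-100." The sketch below keeps that shape but, to stay dependency-free, swaps the Sentence-BERT embeddings named above for plain bag-of-words count vectors. That is a lexical stand-in, not semantic matching: it only rewards shared words, which is exactly the keyword-counting behavior a semantic model improves on. With the sentence-transformers library you would instead encode both texts with a `SentenceTransformer` model and compare them with `util.cos_sim`; the scaling step stays the same, except that embedding cosines can be negative and need clipping. The function names here are hypothetical.

```python
import math
import re
from collections import Counter


def term_counts(text: str) -> Counter:
    # Lowercase word counts; '+' and '#' are kept so "C++" and "C#" survive.
    return Counter(re.findall(r"[a-z0-9+#]+", text.lower()))


def cosine_similarity(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def skills_match_score(resume: str, job_description: str) -> int:
    # Count vectors are non-negative, so cosine already lies in [0, 1].
    # The max() clip only matters if you swap in embeddings, whose cosine can be negative.
    sim = cosine_similarity(term_counts(resume), term_counts(job_description))
    return round(100 * max(0.0, sim))


jd = "Cloud engineer: AWS, EC2, S3, IAM, Terraform, Kubernetes"
resume = "AWS Solutions Architect. Built EC2 and S3 infrastructure, managed IAM."
print(skills_match_score(resume, jd))
```

Whatever model produces the similarity, keep the 0-100 mapping fixed across candidates and requisitions; otherwise the bands you share with clients ("80+ indicates strong alignment") stop meaning the same thing from one search to the next.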

- Design the AI-Enhanced Shortlist Format: Create a standardized template that includes:

- Candidate Name, Current Role, Location

- AI Scores: Skills Match, Experience Relevance, Learnability (etc.)

- Brief, Evidence-Based Rationale for Each Score (1-2 sentences): e.g., "Skills Match: 85 - Strong semantic match to JD on 'AWS', 'EC2', 'S3', 'IAM' based on 3 years as an AWS Solutions Architect."

- Key Strengths and Potential Gaps (based on scores and resume): e.g., "Strength: Deep AWS infrastructure experience. Potential Gap: Less exposure to Kubernetes-based orchestration."

- Recommended Next Steps (based on scores and role needs): e.g., "Recommend technical interview to validate AWS skills and explore cloud cost optimization experience."

- (Optional) Recruiter Holistic Insight: A brief note based on structured interview or work sample (e.g., "Recruiter Note: Candidate demonstrated strong problem-solving in the technical screen, aligning with the high learnability score.")
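A standardized template is easiest to enforce when it is a data structure rather than a document people copy and edit. Here is a minimal sketch of the fields listed above as a Python dataclass with a plain-text renderer; the `ShortlistEntry` name, the field names, and the "Remote" location in the example are hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class ShortlistEntry:
    name: str
    current_role: str
    location: str
    scores: Dict[str, int]                # e.g. {"Skills Match": 85}
    rationale: Dict[str, str]             # 1-2 evidence-based sentences per score
    strengths: List[str]
    gaps: List[str]
    next_step: str
    recruiter_note: Optional[str] = None  # optional holistic insight

    def render(self) -> str:
        lines = [f"{self.name} | {self.current_role} | {self.location}"]
        for label, score in self.scores.items():
            reason = self.rationale.get(label, "no rationale recorded")
            lines.append(f"  {label}: {score} - {reason}")
        lines.append("  Strengths: " + "; ".join(self.strengths))
        lines.append("  Potential gaps: " + "; ".join(self.gaps))
        lines.append("  Recommended next step: " + self.next_step)
        if self.recruiter_note:
            lines.append("  Recruiter note: " + self.recruiter_note)
        return "\n".join(lines)


entry = ShortlistEntry(
    name="Candidate A",
    current_role="AWS Solutions Architect",
    location="Remote",
    scores={"Skills Match": 85},
    rationale={"Skills Match": "Strong semantic match to JD on AWS, EC2, S3, IAM "
                               "based on 3 years as an AWS Solutions Architect."},
    strengths=["Deep AWS infrastructure experience"],
    gaps=["Less exposure to Kubernetes-based orchestration"],
    next_step="Technical interview to validate AWS skills and explore cloud cost optimization.",
)
print(entry.render())
```

Making the rationale a required field, keyed by score label, means a score can't reach the client without its explanation, and a missing one shows up as "no rationale recorded" instead of silently disappearing.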

- Pilot the New Shortlist: Use it for a few live requisitions. Track:

- Time to create the shortlist (should be faster due to automation)

- Recruiter feedback on ease of use

- Initial client reactions (if they respond)

Phase 2: Build Trust Through Transparency and Collaboration (Weeks 6-9)

- Share Your Scoring Methodology (Simply): Don't just send the score; explain how it was derived in plain language:

- "Our AI model evaluates skills match by comparing the meaning of the candidate's resume to the job description using semantic similarity. Think of it as measuring how closely their experience aligns with what you're looking for, not just counting keywords."

- "Scores are on a 0-100 scale, where 80+ indicates strong alignment, 60-79 indicates moderate alignment with potential for growth, and below 60 suggests significant gaps."

- "We regularly audit our model to ensure it's fair and accurate. Here's a summary of our latest bias check."
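What might that bias-check summary contain? One widely used screening heuristic is the "four-fifths rule" from the US Uniform Guidelines on Employee Selection Procedures: compare each group's selection rate (here, the share scoring at or above the 80+ "strong alignment" band) with the highest group's rate, and flag any ratio below 0.8 for review. The sketch below is illustrative only; the group labels and scores are hypothetical. A ratio above 0.8 does not prove the model is fair, the calculation does not control for comparable qualifications (which the audit described in Phase 1 calls for), and small samples are noisy.

```python
from collections import defaultdict

FOUR_FIFTHS = 0.8  # common adverse-impact screening heuristic


def selection_rates(records, threshold=80):
    """records: iterable of (group, score). Returns each group's share scoring >= threshold."""
    passed = defaultdict(int)
    total = defaultdict(int)
    for group, score in records:
        total[group] += 1
        if score >= threshold:
            passed[group] += 1
    return {g: passed[g] / total[g] for g in total}


def impact_ratios(rates):
    """Each group's selection rate divided by the highest group's rate."""
    best = max(rates.values())
    return {g: (r / best if best else 1.0) for g, r in rates.items()}


# Hypothetical audit data: (group label, skills match score)
records = [("X", 85), ("X", 90), ("X", 70), ("X", 82),
           ("Y", 81), ("Y", 60), ("Y", 65), ("Y", 72)]
rates = selection_rates(records)
for group, ratio in impact_ratios(rates).items():
    flag = "REVIEW" if ratio < FOUR_FIFTHS else "ok"
    print(f"group {group}: selection rate {rates[group]:.2f}, impact ratio {ratio:.2f} ({flag})")
```

A flagged ratio is a prompt to investigate (for example, by checking which resume features drive the gap), not a verdict. Sharing both the numbers and how you followed up on them is what makes the summary credible to a client.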

- Invite Client Feedback on the Scores: Make it clear that the AI score is a starting point for discussion, not a final verdict:

- "We see Candidate A scoring 82 on skills match-where do you see their strongest AWS experience in their resume?"

- "Candidate B scored lower on learnability-does their background suggest a different learning trajectory we might be missing?"

- "Does this scoring align with what you're seeing in the candidate pool?"

- Use the Scores to Guide the Conversation: Shift the recruiter's role from "here are the candidates" to "let's look at what the data tells us."

- Focus on gaps and strengths: "The AI suggests Candidate C has strong technical skills but lower exposure to agile methodologies. Would you like to probe that in the interview?"

- Use scores to prioritize: "Given the scores, we recommend focusing interview time on Candidates A and B for technical depth, and using Candidate C to explore potential and motivation."

- Treat discrepancies as collaborative refinement: "You think Candidate D's SQL skills are stronger than the score shows; let's look at their resume together to see where the model might have missed something."

- Document and Share Outcomes: After the hire (or if the search ends), share how the AI scores correlated with the outcome:

- "For this role, the candidate we hired scored 88 on skills match, in the strong range, and 76 on learnability, in the moderate range."

- "We noticed that candidates who scored below 60 on skills match tended to struggle in the technical screen; this helps us refine our threshold for future searches."
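A claim like "candidates below 60 tended to struggle in the technical screen" is easy to back with a simple comparison of pass rates on either side of the cutoff. Here is a minimal sketch; the outcome data and helper names are hypothetical, and with only a handful of candidates per search the difference should be read as a signal to watch across searches, not as proof.

```python
def pass_rate(flags):
    """Share of True values, or None if there are no candidates in the band."""
    return sum(flags) / len(flags) if flags else None


def pass_rate_by_band(outcomes, cutoff=60):
    """outcomes: list of (skills_match_score, passed_technical_screen)."""
    below = [passed for score, passed in outcomes if score < cutoff]
    at_or_above = [passed for score, passed in outcomes if score >= cutoff]
    return {"below": pass_rate(below), "at_or_above": pass_rate(at_or_above)}


# Hypothetical outcomes from one closed search
outcomes = [(55, False), (58, True), (64, False), (72, True), (85, True), (88, True)]
print(pass_rate_by_band(outcomes))
```

Pooling these records across searches for the same role type, and rerunning the comparison at a few candidate cutoffs, is a straightforward way to decide whether 60 is the right line or just a convenient one.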

Phase 3: Embed, Refine, and Scale (Ongoing)

- Make AI-Enhanced Shortlists Standard: Require the use of AI scoring and the transparent shortlist format for all requisitions of a given type.

- Track Engagement Metrics: Monitor:

- Time from shortlist submission to first client feedback

- Percentage of shortlists that receive a response within 48 hours

- Quality of client feedback (is it specific, actionable, and collaborative?)

- Reduction in follow-up emails/calls needed to get a response

- Client satisfaction with the shortlist format (via periodic surveys)
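The first two engagement metrics can be computed directly from a log of when each shortlist went out and when the first client feedback arrived. The sketch below assumes such a log exists as (sent, first feedback or `None`) pairs; the `engagement_metrics` name and the sample timestamps are hypothetical. Shortlists that never got a reply count against the 48-hour rate rather than being dropped, so ghosting shows up in the numbers instead of hiding from them.

```python
from datetime import datetime, timedelta
from statistics import median


def engagement_metrics(shortlists, window=timedelta(hours=48)):
    """shortlists: list of (sent_at, first_feedback_at), with None if the client never replied."""
    if not shortlists:
        raise ValueError("no shortlists to measure")
    waits = [feedback - sent for sent, feedback in shortlists if feedback is not None]
    hours = [w.total_seconds() / 3600 for w in waits]
    return {
        "response_rate": len(waits) / len(shortlists),
        "within_window_rate": sum(1 for w in waits if w <= window) / len(shortlists),
        "median_hours_to_feedback": median(hours) if hours else None,
    }


sent = datetime(2024, 5, 6, 9, 0)
history = [
    (sent, sent + timedelta(hours=20)),
    (sent, sent + timedelta(hours=72)),
    (sent, None),  # ghosted
]
print(engagement_metrics(history))
```

Tracking the same numbers for shortlists sent before and after the new format gives you the comparison that matters; the median is used for time-to-feedback because one very slow reply would otherwise distort an average.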

- Refine the Scoring Model: Based on client feedback and placement outcomes, adjust:

- The weightings of different scores (e.g., if learnability proves more critical than initially thought)

- The thresholds for tiers (e.g., what score constitutes a "strong" match)

- The specific skills or traits the model emphasizes
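Before adopting new weightings (say, because learnability proved more predictive than expected), it helps to rerun a past candidate pool under both the current and the proposed weights and see who moves. The sketch below does that sensitivity check; the pool, both weight sets, and the helper names are hypothetical, and this only shows the *effect* of a weight change. It is not a method for learning the right weights.

```python
def weighted_score(scores: dict, weights: dict) -> float:
    return sum(scores[k] * w for k, w in weights.items()) / sum(weights.values())


def ranking(pool: dict, weights: dict) -> list:
    return sorted(pool, key=lambda name: weighted_score(pool[name], weights), reverse=True)


# Hypothetical pool and weights
pool = {
    "A": {"skills": 88, "learnability": 60},
    "B": {"skills": 80, "learnability": 85},
    "C": {"skills": 70, "learnability": 95},
}
current = {"skills": 0.8, "learnability": 0.2}
proposed = {"skills": 0.6, "learnability": 0.4}  # learnability proved more predictive

before, after = ranking(pool, current), ranking(pool, proposed)
for name in pool:
    print(f"{name}: rank {before.index(name) + 1} -> {after.index(name) + 1}")
```

Here the proposed weights would push Candidate A from first to last. If that contradicts what actually happened with A, it is a strong hint the change overcorrects; if it matches, you have concrete evidence to share when explaining the new weighting to clients.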

- Expand the Use of Insights: Use the AI scores not just for shortlists, but for:

- Proactive market intelligence: "We're seeing that candidates with this skill set are scoring lower on learnability than expected, suggesting a potential training need."

- Internal talent mobility: Matching employees to internal gigs or projects based on skill scores.

- Offer justification: Using scores to demonstrate why a candidate is worth a certain salary band.

- Foster a Culture of Partnership: Position the AI-enhanced shortlist as a tool for collaboration:

- "We're not here to tell you who to hire; we're here to give you a clear, objective view of the talent pool so you can make the best decision for your team."

- "Let's use this data together to understand what's available and what trade-offs we might need to make."

Conclusion: AI Scoring as the Antidote to Silence

Client ghosting after shortlists isn't just a nuisance; it's a symptom of a breakdown in communication, trust, and clarity.

When the shortlist fails to provide a clear, objective, and actionable view of the talent pool, it becomes easy for the client to disengage, not because they don't care, but because they don't know how to engage constructively or don't trust the information provided.

AI scoring, when implemented as part of a transparent, structured, and collaborative process, doesn't just automate evaluation; it transforms the recruiter-client conversation.

By replacing subjective, opaque assessments with transparent, data-driven insights, it gives clients a clear framework for evaluating candidates, reduces the cognitive load of engagement, builds trust through visibility, and makes the next step obvious.

The true power of AI scoring isn't in the algorithm itself but in what it enables: a conversation where the client doesn't have to wonder whether they can trust the recruiter's judgment and can instead focus on what the data tells them about the candidate's actual capabilities.

It turns the shortlist from a report that gets lost in the inbox into a tool that invites collaboration, reduces ambiguity, and makes it significantly harder for clients to disengage without consequence.

In an industry built on relationships, there is no greater competitive advantage than being the agency whose shortlists clients look forward to opening, because they know they'll find clarity, insight, and a genuine partner in the search for great talent.

Start not by buying an AI model, but by asking: "What does my client need to see to feel confident, informed, and ready to act?" Then use AI scoring to give them exactly that. The silence will break, and the conversation will finally move forward.