5 Reasons Resume Screening Fails at Scale

Resume screening breaks down in high-volume hiring. Discover why manual resume screening fails and how interview-first screening improves shortlist quality and speed.


Introduction

In a world where a single job posting can attract thousands of applications, turning to technology feels less like an innovation and more like a necessity.

The siren song of AI-powered resume screening is powerful: instant sorting, reduced workload, and the promise of data-driven objectivity.

Yet, on professional networks like LinkedIn, you'll find a growing murmur of dissent: hiring managers suspicious of the 'perfect' candidates the system surfaces, and candidates who feel their applications vanish into a digital void, never to be seen by human eyes.

This friction points to a deeper truth: automated resume screening, when scaled without careful consideration, often breaks in predictable and problematic ways. At its core, the challenge is one of translation. We are attempting to reduce the rich, nuanced story of a person's career into a set of data points a machine can process.

The goal of efficiency and reach is laudable, but the path is fraught with technical and ethical pitfalls that can undermine the entire hiring process. For start-ups racing to build teams and hiring managers tasked with finding genuine talent, understanding these failures is the first step toward building a better, more equitable system.

In this article, we will explore the five key reasons why standard resume screening fails at scale: algorithmic bias, a crippling lack of transparency, the "garbage in, garbage out" peril of poor training data, the superficiality of keyword-based matching, and the critical error of automating away essential human oversight.

2. The Impenetrable "Black Box": A Crippling Lack of Transparency

When a hiring manager receives a shortlist of five candidates from a pool of five thousand, a fundamental question arises: "Why these five?" With many commercial resume screening tools, a satisfactory answer is impossible.

These systems often operate as "black boxes," where the internal decision-making logic is proprietary and opaque.

This lack of transparency is not just an inconvenience; it cripples the hiring process in several ways. First, it destroys accountability.

If a highly qualified candidate is inexplicably rejected, there is no way to audit the system to determine if the cause was a bug, a bias, or a simple misreading of the resume format. Second, it erodes trust. Hiring managers are asked to rely on a tool they cannot interrogate, which can lead to second-guessing and a reluctance to adopt the technology fully.

Finally, and most critically from a legal and ethical standpoint, opacity makes it impossible to demonstrate fairness. In jurisdictions that regulate algorithmic decision-making, such as the EU under its AI Act, the inability to explain an automated decision could expose a company to significant compliance risks and legal challenges.
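To make auditability concrete, here is a minimal sketch assuming an interpretable linear scoring model. The feature names and weights are hypothetical and hand-set for illustration; commercial tools rarely expose anything this simple. What matters is the shape of the output: every score arrives with the per-feature contributions that produced it (so-called "reason codes"), so a rejection can be traced back and questioned.

```python
from dataclasses import dataclass

# Hypothetical feature weights for an interpretable linear scorer.
# A real system would learn and document these rather than hand-set them.
WEIGHTS = {
    "years_relevant_experience": 0.5,
    "required_skills_matched": 1.0,
    "certifications": 0.25,
}


@dataclass
class ScoredCandidate:
    name: str
    score: float
    reasons: list[tuple[str, float]]  # (feature, contribution), largest first


def score_with_reasons(name: str, features: dict[str, float]) -> ScoredCandidate:
    """Score a candidate and keep each feature's contribution as a reason code."""
    contributions = {
        feature: weight * features.get(feature, 0.0)
        for feature, weight in WEIGHTS.items()
    }
    reasons = sorted(contributions.items(), key=lambda kv: kv[1], reverse=True)
    return ScoredCandidate(name, sum(contributions.values()), reasons)


candidate = score_with_reasons(
    "candidate-042",
    {"years_relevant_experience": 4, "required_skills_matched": 3, "certifications": 1},
)
print(candidate.score)  # 5.25
for feature, contribution in candidate.reasons:
    print(f"{feature}: {contribution:+.2f}")
```

A linear model is used here only because its contributions are trivially explainable; more complex models need dedicated explanation techniques, which this sketch does not cover.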

The market perception, often voiced on social media, is one of frustration: a sense that important career decisions are being made by an unaccountable machine.

3. Garbage In, Garbage Out: The Peril of Inadequate Training Data

The performance of any AI system is fundamentally constrained by the quality, quantity, and diversity of the data it was trained on. This is the classic "garbage in, garbage out" principle, and it is acutely relevant to resume screening. An algorithm is only as good as its training set. If the underlying data is flawed, the output will be too. The challenges with training data are multifaceted.

Volume:

Many systems require vast amounts of labelled data (resumes tagged with hiring outcomes) to perform well. A startup with limited hiring history may not have this, leading to poorly calibrated models.

Diversity:

If the training data comes from a non-diverse workforce, the model will not learn to recognise the signals of talent from different backgrounds, educational paths, or career trajectories. A model trained solely on resumes from Ivy League graduates will struggle to accurately assess the potential of a brilliant self-taught developer or a candidate from a lesser-known state university.

Relevance:

The data must be relevant to the specific context. Using a generic, off-the-shelf model trained on data from a different industry or country can lead to catastrophic misinterpretations of skills and experiences.

Building a robust screening tool therefore requires a deliberate and ongoing effort to curate a training dataset that is representative, comprehensive, and clean, which is a non-trivial task for any organisation.
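The volume and diversity checks above can be partly automated before any model is trained. The sketch below assumes a hypothetical dataset schema (one dict per labelled resume, with an "education_path" field) and illustrative thresholds (500 examples, 10% minimum share) that are not industry standards. It only flags obvious gaps: it cannot detect a group that is missing entirely, and passing it does not prove a model is fair.

```python
from collections import Counter


def audit_training_data(records, group_key, min_total=500, min_share=0.10):
    """Return warnings about dataset volume and group representation.

    records:   list of dicts, one per labelled resume (hypothetical schema)
    group_key: field whose representation is checked, e.g. "education_path"
    min_total, min_share: illustrative thresholds, not industry standards
    """
    if not records:
        return ["volume: no labelled examples at all"]
    warnings = []
    if len(records) < min_total:
        warnings.append(f"volume: only {len(records)} labelled examples (< {min_total})")
    counts = Counter(record[group_key] for record in records)
    for group, n in sorted(counts.items()):
        share = n / len(records)
        if share < min_share:
            warnings.append(f"diversity: '{group}' is only {share:.0%} of the data")
    return warnings


# Invented dataset: 400 labelled resumes, heavily skewed towards one education path.
data = (
    [{"education_path": "ivy_league", "hired": True}] * 360
    + [{"education_path": "state_university", "hired": True}] * 32
    + [{"education_path": "self_taught", "hired": False}] * 8
)
for warning in audit_training_data(data, "education_path"):
    print(warning)
```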

4. The Keyword Trap: Over-reliance on Superficial Signals

One of the oldest and most pervasive methods of automated screening is keyword matching.

While simple to implement, this approach is notoriously flawed and a primary reason for screening failure at scale. Keyword-based systems scan resumes for the presence of specific terms (e.g., "Python," "Agile," "AWS") and rank candidates based on keyword frequency or density.
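A minimal sketch of density-based scoring, assuming single-word keywords and simple case-insensitive tokenisation. The function name and example resumes are hypothetical, not taken from any particular product.

```python
import re


def keyword_density(resume_text: str, keywords: list[str]) -> float:
    """Share of resume tokens that exactly match a target keyword (case-insensitive)."""
    tokens = re.findall(r"[a-z0-9+#]+", resume_text.lower())
    if not tokens:
        return 0.0
    targets = {k.lower() for k in keywords}
    hits = sum(1 for token in tokens if token in targets)
    return hits / len(tokens)


keywords = ["Python", "Agile", "AWS"]
resumes = {
    "A": "Built Python services on AWS in an Agile team",
    "B": "Designed data pipelines and led a small engineering team",
}
ranked = sorted(resumes, key=lambda r: keyword_density(resumes[r], keywords), reverse=True)
print(ranked)  # ['A', 'B']
```

Resume B may describe equally relevant work, but it scores zero because none of its words appear on the list.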

This method creates two major problems: false positives and false negatives.

False positives occur when candidates "keyword-stuff" their resumes to game the system. An applicant might list every technology they've ever heard of, making them appear highly qualified on paper while lacking genuine depth in any key area. They pass the automated screen but fail miserably in the technical interview, wasting everyone's time.
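The sketch below shows why frequency counting is easy to game. It assumes a hypothetical keyword list and two invented resumes: one describes real work in prose, the other is a bare, repetitive skills list.

```python
import re

# Hypothetical target keywords for a backend role.
KEYWORDS = {"python", "agile", "aws", "kubernetes", "docker", "terraform"}


def keyword_hits(resume_text: str) -> int:
    """Count every occurrence of a target keyword, as frequency-based screens do."""
    tokens = re.findall(r"[a-z0-9+#]+", resume_text.lower())
    return sum(1 for token in tokens if token in KEYWORDS)


genuine = (
    "Five years building Python data services on AWS; "
    "led migration of 40 services to a new deployment platform"
)
stuffed = "Skills: Python, Python 3, AWS, Agile, Scrum, Kubernetes, Docker, Terraform, Python, AWS"

print(keyword_hits(genuine), keyword_hits(stuffed))  # 2 9
```

The stuffed resume outscores the genuine one by a wide margin, even though it says nothing about what the applicant actually built.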

False negatives are arguably more damaging. A highly qualified candidate might describe their experience with Amazon Web Services as "cloud infrastructure management" without using the exact acronym "AWS". A rigid keyword scanner would miss this candidate entirely, potentially excluding the best person for the job. This approach ignores semantics, context, and the richness of human language, reducing a complex skillset to a simplistic and easily manipulated checklist.
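One partial remedy is to normalise different phrasings to a canonical skill before matching. The sketch below assumes a small, hypothetical alias table and an invented resume line; it catches the spelled-out "Amazon Web Services" that an exact "AWS" match misses.

```python
import re

# Hypothetical alias table: each canonical skill maps to phrasings that imply it.
# A real system would need a large, maintained taxonomy or a semantic model.
SKILL_ALIASES = {
    "aws": ["aws", "amazon web services", "ec2", "s3"],
    "python": ["python", "django", "pandas"],
}


def normalise(text: str) -> str:
    """Lower-case, strip punctuation, and pad with spaces for whole-phrase matching."""
    return " " + " ".join(re.findall(r"[a-z0-9+#]+", text.lower())) + " "


def exact_match(resume_text: str, required: set[str]) -> set[str]:
    """Naive screen: a skill counts only if its exact token appears."""
    words = set(normalise(resume_text).split())
    return {skill for skill in required if skill in words}


def alias_match(resume_text: str, required: set[str]) -> set[str]:
    """A skill counts if any of its known aliases appears as a whole phrase."""
    text = normalise(resume_text)
    return {
        skill
        for skill in required
        if any(f" {alias} " in text for alias in SKILL_ALIASES.get(skill, [skill]))
    }


resume = "Led cloud infrastructure management on Amazon Web Services (EC2, S3) for five years."
print(exact_match(resume, {"aws"}))  # set()
print(alias_match(resume, {"aws"}))  # {'aws'}
```

An alias table is still brittle: a resume that mentions only "cloud infrastructure management", with no vendor name at all, would slip through, which is why semantic, skills-based matching is the more durable direction.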

5. The Human Vacuum: Automating Away Essential Oversight

In the pursuit of efficiency, some organisations make the critical error of attempting to fully automate the screening process, creating a "human vacuum."

They assume the AI's output is the final word. This is a fundamental misapplication of the technology: automated resume screening should augment human judgment, not replace it. Human oversight is essential because it supplies judgment that machines currently cannot replicate.

Evaluating Soft Skills:

A resume can hint at soft skills like leadership or communication, but a human is needed to read between the lines and interpret the narrative of a career path.

Contextual Understanding:

A human can understand that a two-year career gap might be for parental leave or personal development, whereas an algorithm might see it only as a red flag.

Catching Edge Cases:

Automated systems are poor at handling unusual resume formats, non-traditional career progressions, or extraordinary achievements that don't fit a standard pattern. Without a human in the loop to catch these nuances, the system's mistakes (whether from bias, poor data, or keyword folly) become permanent, and excellent candidates are lost.

The most effective screening processes use automation to handle the initial volume reduction but reserve final shortlisting for human experts who can apply empathy, context, and strategic thinking.
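A minimal sketch of that division of labour, assuming the automated screen produces a score between 0 and 1 and can attach flags for edge cases such as a career gap or an unparseable format. The thresholds, flag names, and queue names are hypothetical. The design choice worth noting is that no branch issues a final rejection: flagged and borderline applications go to a person, and low scorers are only deprioritised.

```python
from dataclasses import dataclass, field


@dataclass
class Application:
    candidate_id: str
    score: float                                    # automated screen output, assumed 0..1
    flags: list[str] = field(default_factory=list)  # hypothetical edge-case flags


def route(app: Application, advance_at: float = 0.8, review_at: float = 0.4) -> str:
    """Decide where an application goes next; automation never makes a final rejection."""
    if app.flags:
        return "human_review"     # edge cases (gaps, odd formats) always reach a person
    if app.score >= advance_at:
        return "human_shortlist"  # a recruiter still makes the final shortlist decision
    if app.score >= review_at:
        return "human_review"     # borderline scores get a human read
    return "deprioritised"        # lower in the queue, but not auto-rejected


applications = [
    Application("c1", 0.91),
    Application("c2", 0.55),
    Application("c3", 0.20, ["career_gap"]),
    Application("c4", 0.10),
]
for app in applications:
    print(app.candidate_id, route(app))
```

Periodically sampling the deprioritised queue for human review is one way to catch systematic errors before they become permanent.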

Conclusions: Towards a More Equitable and Effective Process

The failures of resume screening at scale are not an indictment of technology itself, but a warning about its careless implementation. The path forward requires a deliberate and balanced approach.

- AI is a Tool, Not a Panacea: The primary lesson is that automation must be applied judiciously. The goal is to build a hiring process that is both efficient and humane.

- Transparency is Non-Negotiable: Companies must demand explainable AI from their vendors or build in-house systems where key decisions can be audited and understood. Trust cannot be built on opaque foundations.

- Data Quality Precedes Model Quality: Investing in the creation of diverse, representative, and high-quality training data is the most critical step toward building a fair and accurate screening system.

- Move Beyond Keywords: Effective screening requires semantic and skills-based matching that understands context and synonymy, moving us closer to evaluating a candidate's actual capabilities rather than their vocabulary.

- Humans Must Remain in the Loop: The final decision-making authority, especially for nuanced roles, must involve human judgment. The ideal system empowers recruiters by handling the drudgery, freeing them to focus on what they do best: evaluating talent.

Future Directions

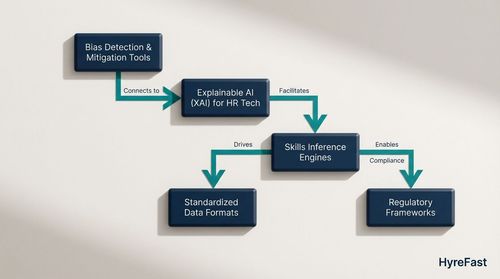

The evolution of resume screening will likely focus on mitigating these core failures. Key areas of development include:

- Bias Detection and Mitigation Tools: The development of more sophisticated, real-time tools to audit models for bias and automatically adjust scores to promote fairness.

- Explainable AI (XAI) for HR Tech: A push for greater transparency, with vendors providing "reason codes" for why a candidate was ranked highly or poorly.

- Skills Inference Engines: Moving beyond resumes to develop AI that can infer skill proficiency from project portfolios, GitHub activity, and online certifications, creating a more holistic view of a candidate.

- Standardised Data Formats: Industry-wide efforts to create structured, machine-readable resume formats (such as the open-source JSON Resume schema) to reduce parsing errors and create a more level playing field for all candidates.

- Regulatory Frameworks: As governments catch up, we can expect more defined regulations around the use of AI in hiring, which will force a higher standard of accountability and fairness.
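As an illustration of the bias-audit tooling mentioned above, one long-standing heuristic is the "four-fifths rule" from the US Uniform Guidelines on Employee Selection Procedures: a group whose selection rate is below 80% of the highest group's rate is generally treated as evidence of possible adverse impact. The sketch below applies it to screening pass rates; the group labels and counts are invented, and a flag is a prompt for investigation, not a legal finding.

```python
def adverse_impact_ratios(
    outcomes: dict[str, tuple[int, int]], threshold: float = 0.8
) -> dict[str, float]:
    """Return groups whose selection rate is below `threshold` x the highest group's rate.

    outcomes maps a group label to (passed_screen, total_applicants).
    """
    rates = {g: passed / total for g, (passed, total) in outcomes.items() if total > 0}
    if not rates:
        return {}
    best = max(rates.values())
    if best == 0:
        return {}  # nobody passed, so impact ratios are undefined
    return {g: rate / best for g, rate in rates.items() if rate / best < threshold}


# Invented screening outcomes per applicant group.
screen_outcomes = {
    "group_a": (120, 400),  # 30% pass rate
    "group_b": (45, 250),   # 18% pass rate
    "group_c": (84, 300),   # 28% pass rate
}
flagged = adverse_impact_ratios(screen_outcomes)
for group, ratio in flagged.items():
    print(f"{group}: impact ratio {ratio:.2f}")
```

Here only group_b is flagged: its 18% pass rate is 60% of group_a's 30%, below the four-fifths threshold, while group_c's ratio of roughly 0.93 is not.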

By understanding the technical and ethical pitfalls, startups and hiring managers can move beyond the hype and implement screening processes that truly scale with intelligence and integrity.

The future of hiring lies not in removing the human element, but in using technology to make human decision-making more impactful.